One of the best-engineered AI systems I use every day lives in my dishwasher.

It has a little sensor that checks how dirty the water is at each stage of the wash, and it adjusts the cycle in real time — longer if the dishes are caked, shorter if they're not, hotter if there's grease. It doesn't talk to the cloud. It doesn't need a wifi password. It just does its job.

That's edge AI, and once you start looking for it, you notice it everywhere.

What "edge" actually means

"Edge AI" sounds vaguely futuristic, but the definition is mundane: the AI model runs on the device that's collecting the data, rather than on a server somewhere else.

Your smartphone does this when it blurs the background behind your face on a video call. Your doorbell does this when it decides whether the movement outside is a person or a passing cat. Your car does this when it keeps you in lane.

None of these needs to send video to the cloud to be analysed. They can do the thinking locally, on a chip the size of a postage stamp.

Why it matters

There are four big reasons to run AI at the edge:

Latency. A cloud round-trip typically takes somewhere in the range of 50–500 ms, while local inference on a small model can finish in single-digit milliseconds. For anything that has to react in real time (a car's emergency brake, a factory's defect detection, a hearing aid's noise cancellation), the cloud is simply too slow.

Privacy. Data that never leaves the device can't be intercepted in transit or leaked from someone else's server. That's the design strength of your phone's face unlock: your face data never goes anywhere.

Resilience. Edge systems keep working when the network dies. If your patient monitor needs a 4G connection to decide whether to alarm, you have a worse monitor.

Cost. Streaming video to the cloud for analysis means paying for bandwidth and server time on every frame. Having a local chip do it costs pennies in electricity.
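To put the latency figures above in physical terms, here's a back-of-the-envelope sketch of how far a vehicle travels while it waits for a decision. The 100 km/h speed is my own assumed example, and the latencies are the ballpark numbers quoted above rather than measurements.

```python
# Rough illustration: distance covered while waiting for an inference result.
# The speed (100 km/h) is an assumed example; the latencies are ballpark
# figures, not measurements from any particular system.

def distance_travelled_m(speed_kmh: float, latency_ms: float) -> float:
    """Distance in metres covered at speed_kmh during latency_ms."""
    speed_m_per_s = speed_kmh * 1000 / 3600
    return speed_m_per_s * (latency_ms / 1000)


for label, latency_ms in [("local, 5 ms", 5), ("cloud, 50 ms", 50), ("cloud, 500 ms", 500)]:
    print(f"{label}: {distance_travelled_m(100, latency_ms):.2f} m")
```

Under those assumptions the car covers roughly 0.14 m during a 5 ms local inference, 1.39 m during a 50 ms round-trip, and 13.89 m during a 500 ms one.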

What's made it possible

Three quiet revolutions, none of them splashy, have converged over the last decade:

-

Model distillation. Researchers figured out how to shrink a neural network by 10-100× while keeping most of its accuracy. The version of an image classifier that runs on a phone is a tiny cousin of the version that ran on a rack of GPUs.

-

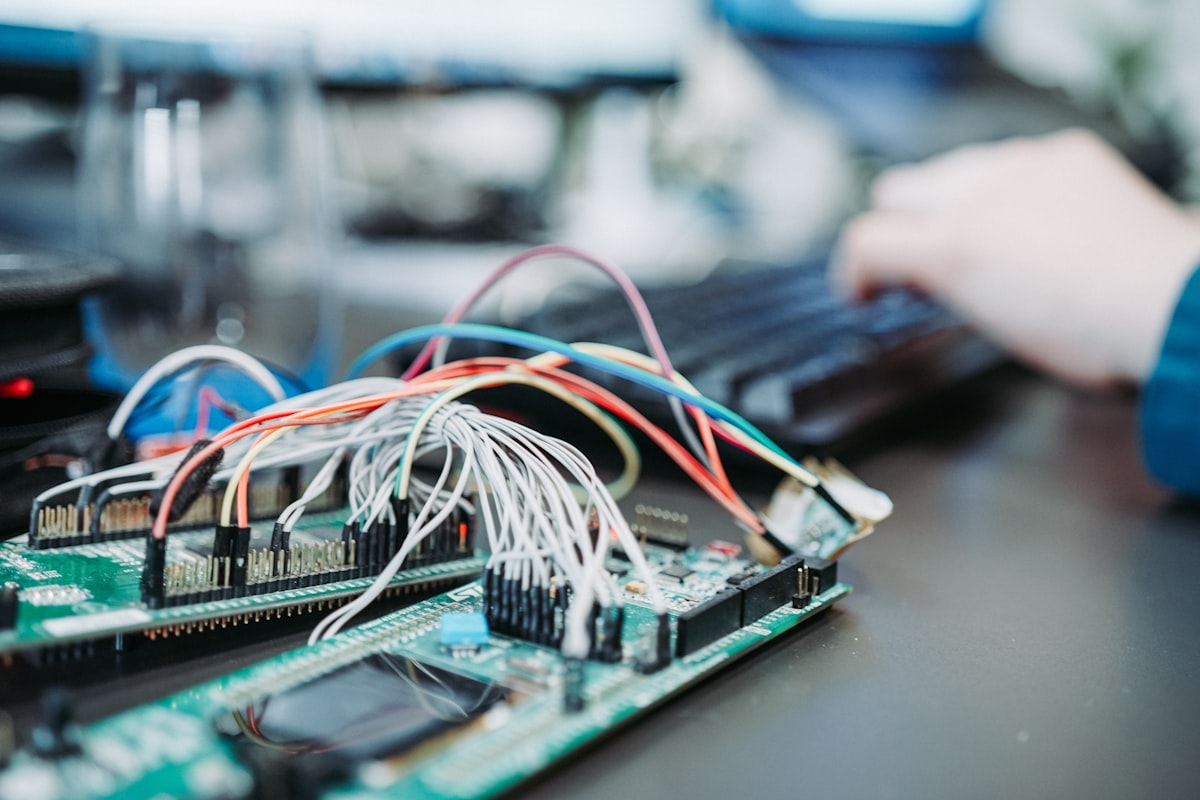

Specialised chips. Modern phones, microcontrollers, and even batteries ship with dedicated neural accelerators. They're dramatically more power-efficient than a general-purpose CPU at running AI workloads.

-

Frameworks that actually work. TensorFlow Lite, ONNX Runtime, CoreML, and PyTorch Mobile have gotten good enough that porting a trained model to an edge device is a day's work, not a month's.
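For the curious, the core idea behind distillation fits in a few lines. A small "student" model is trained to match the softened output probabilities of a large "teacher". What follows is a minimal pure-Python sketch of that loss term only, not a training loop; the function names and the temperature of 2.0 are my own illustrative choices.

```python
import math


def softmax(logits, temperature=1.0):
    """Softmax with temperature; a higher temperature gives softer probabilities."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on temperature-softened outputs, scaled by T^2.

    Assumes logits of moderate size, so no student probability underflows to zero.
    """
    teacher_probs = softmax(teacher_logits, temperature)
    student_probs = softmax(student_logits, temperature)
    kl = sum(
        p * math.log(p / q)
        for p, q in zip(teacher_probs, student_probs)
        if p > 0
    )
    return kl * temperature ** 2


teacher = [4.0, 1.0, 0.2]      # made-up logits from a large model
good_student = [3.5, 1.2, 0.1]  # close to the teacher
poor_student = [1.0, 1.0, 1.0]  # no idea which class is right

print(distillation_loss(good_student, teacher))
print(distillation_loss(poor_student, teacher))
```

The loss is zero when the student reproduces the teacher's distribution exactly and grows as the two diverge, so the second print shows a larger value than the first. In practice this term is usually combined with an ordinary loss on the true labels.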

Where it's making a difference

Some of the prettiest edge AI deployments I've seen recently:

- Maternal health in rural Kenya. A handheld ultrasound probe with an on-device classifier spots high-risk pregnancies without needing a cloud connection. The clinic doesn't have reliable internet. The device doesn't care.

- Conservation acoustics. Solar-powered audio sensors in the Amazon rainforest listen for chainsaws and gunshots, run a local model to classify the sound, and only wake up their satellite uplink if they hear something worth reporting.

- Warehouse safety. Camera-on-a-chip systems above forklifts spot when a pedestrian enters a dangerous zone, and flash a warning — locally, in real time, no server in the loop.
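The conservation example above follows a pattern worth stealing: classify locally, and only power up the expensive radio when there's something to say. Here's a minimal sketch of that gating logic. The labels, the 0.8 threshold, and the `Detection` type are hypothetical, chosen for illustration rather than taken from any real deployment.

```python
from dataclasses import dataclass

# Hypothetical labels and threshold, for illustration only.
ALERT_LABELS = {"chainsaw", "gunshot"}
CONFIDENCE_THRESHOLD = 0.8


@dataclass
class Detection:
    label: str
    confidence: float


def should_uplink(detection: Detection) -> bool:
    """Wake the radio only for a confident detection of an alert sound."""
    return (
        detection.label in ALERT_LABELS
        and detection.confidence >= CONFIDENCE_THRESHOLD
    )


def reports_to_send(detections):
    """Keep only the detections worth spending uplink power on."""
    return [d for d in detections if should_uplink(d)]


heard = [
    Detection("birdsong", 0.97),
    Detection("chainsaw", 0.55),  # too uncertain to report
    Detection("rain", 0.90),
    Detection("chainsaw", 0.93),  # confident alert
]

for report in reports_to_send(heard):
    print(f"uplink: {report.label} ({report.confidence:.2f})")
```

Of the four sounds in this made-up window, only the confident chainsaw detection triggers an uplink. Everything else stays on the device.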

A practical note if you're building at the edge

Three things to budget for that catch teams by surprise:

- The edge is diverse. Your model will run on chips with wildly different capabilities. Plan for it: test on the slowest hardware you intend to support, not just the newest.

- Updates are hard. Pushing a new model to a million field devices is an engineering problem in its own right. Start thinking about it before you ship.

- Observability is different. You can't log everything; bandwidth is precious. Design telemetry carefully — enough to know the model is working, not so much that you drain the battery.
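One way to approach the telemetry point above is to aggregate on the device and send a single small summary per reporting window, rather than logging every inference. This is a sketch under my own assumptions: the class name, the payload fields, and the five-bucket confidence histogram are all illustrative.

```python
import json
from collections import Counter


class TelemetrySummary:
    """Accumulates per-inference stats on-device; emits one compact payload per window."""

    def __init__(self, buckets=5):
        self.buckets = buckets
        self._reset()

    def _reset(self):
        self.count = 0
        self.label_counts = Counter()
        self.confidence_hist = [0] * self.buckets

    def record(self, label, confidence):
        """Record one inference; confidence is expected to lie in [0, 1]."""
        self.count += 1
        self.label_counts[label] += 1
        # Map the confidence to a histogram bucket, clamping to the valid range.
        index = int(confidence * self.buckets)
        index = max(0, min(index, self.buckets - 1))
        self.confidence_hist[index] += 1

    def flush(self):
        """Return a compact JSON payload for the window and start a new one."""
        payload = json.dumps(
            {
                "n": self.count,
                "labels": dict(self.label_counts),
                "conf_hist": self.confidence_hist,
            },
            separators=(",", ":"),
        )
        self._reset()
        return payload


summary = TelemetrySummary()
summary.record("person", 0.95)
summary.record("person", 0.91)
summary.record("cat", 0.35)
print(summary.flush())
```

A drift in the confidence histogram, or a label that suddenly dominates, is often enough to tell you something has gone wrong in the field, and the payload stays the same size however many inferences the window contained.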

Why I keep banging on about this

The world's getting quieter and smarter at the same time. Good edge AI is invisible: the dishwasher that washes properly, the hearing aid that filters out restaurant noise, the camera that blurs your background when you want it to. None of that will trend on Twitter. All of it makes life better.

If you're thinking about moving AI out of the cloud and onto the device, we'd love to help you think it through. Let's chat.