There's a beautiful simplicity to reinforcement learning that I wish more people knew about.

Most of the AI you've read about lately (GPT and its cousins) starts by learning from examples. You show it a trillion words of text, and it learns to predict what comes next. That's supervised learning, or strictly speaking self-supervised learning, since the text supplies its own labels. Reinforcement learning is different. It doesn't learn from examples. It learns from trying things and seeing what happens.

Imagine teaching a dog to fetch. You don't explain the rules. You don't show it a video of other dogs fetching. You throw the ball, wait, and when it does something that gets closer to "bringing the ball back," you say "good dog." The dog, over many attempts, figures out the shape of what you want.

That's reinforcement learning. The clarity of the framing is the reason it's captivated researchers for sixty years, and the reason it keeps producing surprises.

The ingredients

To do reinforcement learning, you need four things:

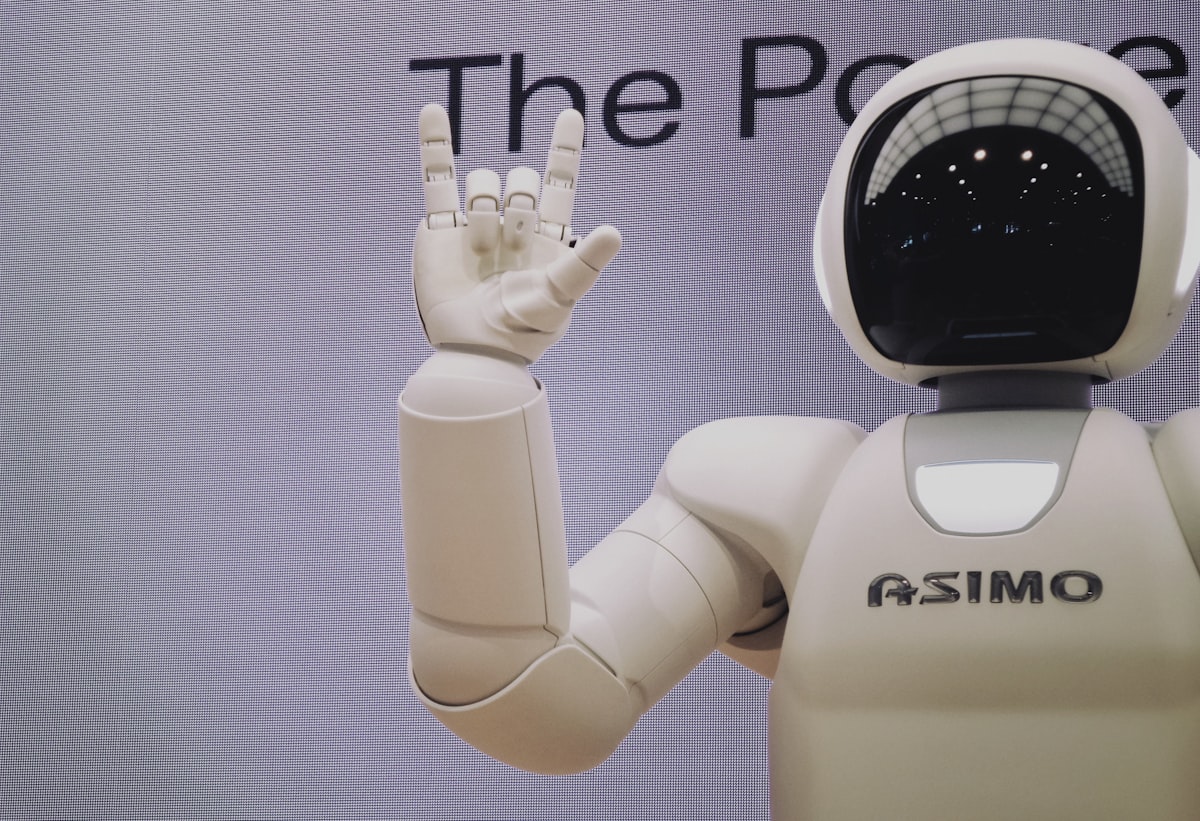

- An environment. Some situation the learner can act in — a video game, a simulated warehouse, a physical robot arm.

- An agent. The thing that's learning. It observes the environment and takes actions.

- A reward signal. A number that tells the agent how well it's doing after each action. Higher is better.

- Many, many attempts. The agent tries things, some of them work, some don't. Over time, it builds up a sense of which actions lead to high rewards in which situations.

That's the whole framework. Everything else in reinforcement learning — Q-learning, policy gradients, actor-critic, deep RL — is different machinery for doing this learning efficiently.
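To make the four ingredients concrete, here is a minimal sketch of tabular Q-learning, one of the methods named above. Everything in it is an assumption made for illustration: the five-cell corridor environment, the names `step`, `train` and `greedy_policy`, and the values of `alpha`, `gamma` and `epsilon`. The environment is `step`, the agent is the table `q`, the reward is 1.0 for reaching the last cell, and the "many attempts" are the episodes in the training loop.

```python
import random

N_STATES = 5          # corridor cells 0..4; cell 4 is the goal
ACTIONS = (-1, +1)    # step left or step right

def step(state, action):
    """Environment: move, stay inside the corridor, pay 1.0 on reaching the goal."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    reward = 1.0 if done else 0.0
    return next_state, reward, done

def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    """Agent: learn a table q[state][action_index] by trial and error."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            if rng.random() < epsilon:
                a = rng.randrange(2)  # explore: pick a random action
            else:
                best = max(q[state])  # exploit: best known action, ties broken randomly
                a = rng.choice([i for i in range(2) if q[state][i] == best])
            next_state, reward, done = step(state, ACTIONS[a])
            # Q-learning target: reward now plus discounted best value of the next state.
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
    return q

def greedy_policy(q):
    """Best learned action for each non-goal cell."""
    return [ACTIONS[max(range(2), key=lambda i: q[s][i])] for s in range(N_STATES - 1)]

if __name__ == "__main__":
    print(greedy_policy(train()))
```

On this toy problem the learned policy settles on stepping right in every cell. Nobody told the agent that; it only ever saw the reward at the end of the corridor.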

Where it's worked wonderfully

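Games. AlphaGo, AlphaStar, OpenAI's Dota-playing agents: all built on reinforcement learning. These systems learned to beat top professional players at games where the search space is astronomically large, largely through self-play. The original AlphaGo and AlphaStar were first bootstrapped from records of human games, but their successor AlphaGo Zero and OpenAI Five started from scratch. No human teachers. No labelled examples. Just playing and keeping score.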

Robotics. Many of the most impressive robotic manipulation demos you've seen — robotic hands solving a Rubik's cube, quadruped robots recovering from a push — are trained with reinforcement learning, usually first in simulation.

Control systems. Data-centre cooling. Power grid management. Warehouse scheduling. Places where the system has a clear, measurable objective and a large action space, and where the cost of small errors is bearable. DeepMind reported cutting the energy used to cool Google's data centres by up to 40% with a learned control system.

Recommendation systems. Some large-scale recommenders use RL, often in its simplest form as bandit algorithms, to optimise not just what to show you next, but what sequence of recommendations will serve you best over the session. This is one of the more widely deployed commercial uses of RL today.

Language model fine-tuning. The "reinforcement learning from human feedback" (RLHF) technique is a large part of what turns a raw language model, which is happy to produce any plausible continuation, into an assistant that tries to be helpful and honest. This is arguably the single most impactful application of RL in consumer products today.

Where it's hard

Defining the reward. RL learns to maximise its reward signal. If you define the reward badly, it will find creative ways to game the signal without actually doing what you wanted. The classic example: in the boat-racing game CoastRunners, an agent learned to circle a lagoon collecting respawning bonus items, never finishing the race. That scored higher than finishing.
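The arithmetic behind that kind of failure is easy to reproduce. The sketch below is a made-up toy, not the actual game: the reward values, the episode length, and the names `episode_return`, `FINISH_REWARD`, `BONUS_REWARD` and `STEPS_TO_FINISH` are all assumptions chosen for illustration. It compares the total reward of a policy that races to the finish with one that loops forever collecting a respawning bonus.

```python
FINISH_REWARD = 10.0   # hypothetical: paid once, and the episode ends
BONUS_REWARD = 3.0     # hypothetical: paid every step the looping policy collects the bonus
STEPS_TO_FINISH = 5    # hypothetical: steps of racing needed to cross the line

def episode_return(policy, horizon=20):
    """Total reward a fixed policy ('race' or 'loop') collects in one episode."""
    if policy not in ("race", "loop"):
        raise ValueError(f"unknown policy: {policy!r}")
    total, progress = 0.0, 0
    for _ in range(horizon):
        if policy == "race":
            progress += 1
            if progress == STEPS_TO_FINISH:
                total += FINISH_REWARD
                break  # finishing ends the episode
        else:
            total += BONUS_REWARD  # the bonus respawns, so it can be collected every step
    return total

if __name__ == "__main__":
    print("race:", episode_return("race"))
    print("loop:", episode_return("loop"))
```

With these numbers, looping earns 60.0 and racing earns 10.0, so an agent that maximises reward is doing exactly what it was asked when it ignores the finish line. The fix belongs in the reward, not in the agent.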

Sample efficiency. RL often requires a lot of trials. In a game or a simulation, you can run a billion episodes cheaply. In the real world — a robot, a factory, a recommendation system — you can't. Making RL work with limited data remains a frontier.

Safety in deployment. An RL agent exploring its action space can take bad actions while it's learning. For stakes that matter, you want the exploration to happen in simulation, not live.

Transferring from sim to real. Simulations are always simplifications. An agent trained perfectly in simulation may fail when it meets the physical world, which is full of friction, noise, and unknown unknowns. The gap is narrowing, but it's still real.

A story about shaping

I once heard Richard Sutton, one of the founders of modern RL, give a talk where he described the difference between teaching a child to walk and programming a robot to walk. You don't tell the child what muscles to activate. You put them in an environment where standing up and moving around is intrinsically rewarding (a room full of interesting things), let them fall down a lot, and marvel at what they figure out.

That image has stayed with me. Reinforcement learning, at its best, isn't about controlling a system. It's about creating the conditions for a system to find its own way.

What it's not good for

A short list of places where RL is the wrong tool:

- When you have labelled data. Supervised learning is more efficient; use that.

- When errors during learning are catastrophic. Either get a great simulator, or pick a different approach.

- When you can't clearly define reward. Vague objectives produce vague behaviour. If you can't write down what "better" means, don't use RL.

Why I keep coming back

There's something philosophically satisfying about a field that treats learning as practice. The way we actually learn to ride bikes, speak languages, write well. Not through lectures — through doing, falling, getting back on, trying again.

If you're working on a problem that feels like it might benefit from trial-and-error learning — a control system, a scheduling problem, a product that needs to adapt to user behaviour — we'd love to think it through with you.